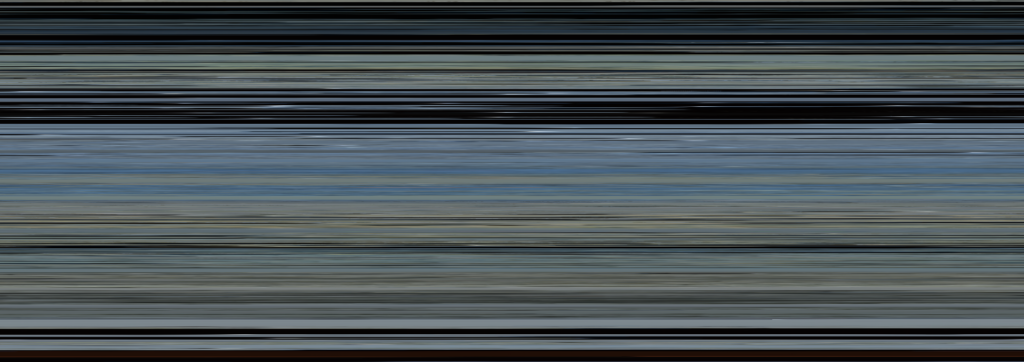

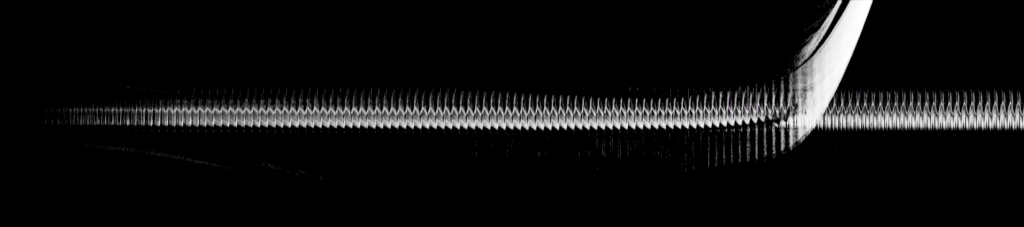

The effect of skipping frames for video visualization

I have been exploring different video visualizations as part of my annual stillstanding project. I post some of them in my daily Mastodon updates; others I only test for future publications. Most of the visualizations and analyses are made with the Musical Gestures Toolbox for Python and structured as Jupyter Notebooks. I have been pondering whether skipping frames before visualizing is a good idea. The 360-degree videos that I create visualizations from are shot at 25 fps....
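To make the idea of frame skipping concrete, here is a minimal sketch of the arithmetic in plain Python. It does not call the Musical Gestures Toolbox; the helper names `kept_frame_indices` and `effective_fps` and the `step` parameter are hypothetical, and I assume that "skipping" means keeping every `step`-th frame, which may differ from how a particular tool counts skipped frames.

```python
def kept_frame_indices(total_frames: int, step: int) -> list[int]:
    """Return the indices of the frames kept when only every `step`-th frame is used.

    `step` is a hypothetical parameter for this sketch: step=1 keeps all frames,
    step=5 keeps frames 0, 5, 10, and so on.
    """
    if step < 1:
        raise ValueError("step must be at least 1")
    return list(range(0, total_frames, step))


def effective_fps(source_fps: float, step: int) -> float:
    """Return the frame rate that remains after keeping every `step`-th frame."""
    if step < 1:
        raise ValueError("step must be at least 1")
    return source_fps / step


SOURCE_FPS = 25.0  # frame rate of the source videos mentioned in the text

# One second of 25 fps video, keeping every 5th frame.
one_second = kept_frame_indices(total_frames=25, step=5)
print(one_second)
print(effective_fps(SOURCE_FPS, step=5))
```

With a step of 5, one second of 25 fps video is reduced to five frames, so any visualization built from the result has one fifth of the temporal resolution and roughly one fifth of the processing cost.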