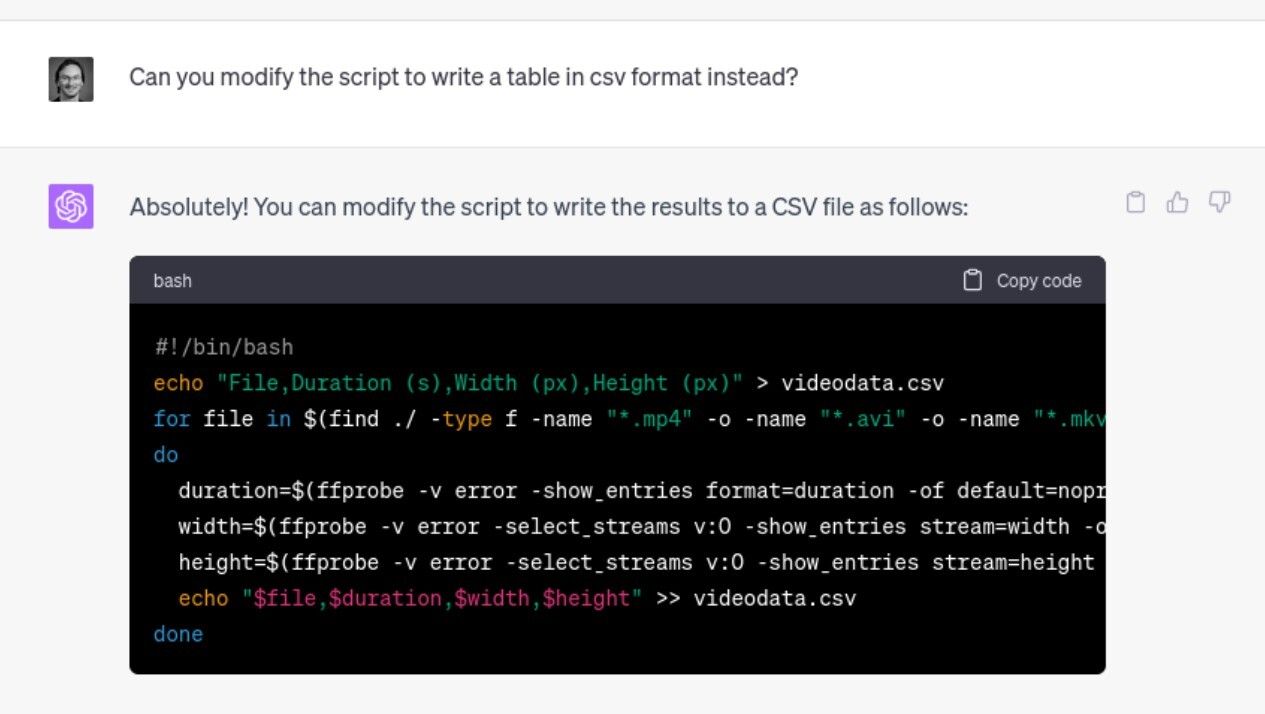

Finding duration and pixel dimensions for a bunch of video files

As part of my #StillStanding project I need to handle a lot of video files on a daily basis. Today, I wanted to check the duration and pixel dimensions of a bunch of files in different folders. As always, I turned to FFmpeg, or more specifically FFprobe, for help. However, figuring out exactly which options extract the right information is tricky. So I decided to ask ChatGPT...
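Below is a minimal sketch of the kind of FFprobe query this involves, wrapped in Python so it can loop over several folders. It is an illustration, not necessarily the solution ChatGPT proposed. It assumes that the `ffprobe` binary from FFmpeg is on the `PATH` and that video files can be recognized by extension (the `VIDEO_EXTENSIONS` set and all function names are hypothetical). The option `-select_streams v:0` limits the query to the first video stream, `-show_entries stream=width,height:format=duration` requests only the pixel dimensions and the container duration, and `-of json` makes the output easy to parse.

```python
"""Sketch: list duration and pixel dimensions of video files with FFprobe.

Assumptions: ffprobe (part of FFmpeg) is on PATH, and videos are
recognized by file extension (VIDEO_EXTENSIONS is a hypothetical choice).
"""
from __future__ import annotations

import json
import subprocess
import sys
from pathlib import Path

# Hypothetical set of extensions treated as video; adjust to your own files.
VIDEO_EXTENSIONS = {".mp4", ".mov", ".mkv", ".avi", ".webm"}


def parse_probe_output(text: str) -> tuple[float, int, int]:
    """Extract (duration in seconds, width, height) from ffprobe JSON output."""
    data = json.loads(text)
    stream = data["streams"][0]  # the first video stream, selected with v:0
    duration = float(data["format"]["duration"])  # JSON gives it as a string
    return duration, int(stream["width"]), int(stream["height"])


def probe(path: Path) -> tuple[float, int, int]:
    """Run ffprobe on one file and return (duration, width, height)."""
    cmd = [
        "ffprobe",
        "-v", "error",              # suppress everything except errors
        "-select_streams", "v:0",   # first video stream only
        "-show_entries", "stream=width,height:format=duration",
        "-of", "json",              # machine-readable output
        str(path),
    ]
    result = subprocess.run(cmd, capture_output=True, text=True, check=True)
    return parse_probe_output(result.stdout)


def find_videos(folders: list[str]) -> list[Path]:
    """Recursively collect video files under each folder, sorted by path."""
    files: list[Path] = []
    for folder in folders:
        files.extend(
            p for p in Path(folder).rglob("*")
            if p.is_file() and p.suffix.lower() in VIDEO_EXTENSIONS
        )
    return sorted(files)


def main(folders: list[str]) -> None:
    """Print one tab-separated line per video: path, duration, WxH."""
    for path in find_videos(folders):
        try:
            duration, width, height = probe(path)
        except (OSError, subprocess.CalledProcessError,
                KeyError, IndexError, ValueError) as err:
            # Missing ffprobe, unreadable file, or no video stream/duration.
            print(f"{path}\tERROR: {err}", file=sys.stderr)
            continue
        print(f"{path}\t{duration:.2f} s\t{width}x{height}")


if __name__ == "__main__":
    main(sys.argv[1:] or ["."])
```

For example, `python probe_videos.py folder1 folder2` (hypothetical script and folder names) prints one line per file with its path, duration in seconds, and width x height. Note that FFprobe reports the stored frame size, so phone videos carrying rotation metadata may play back with width and height swapped.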