Try not to headbang challenge

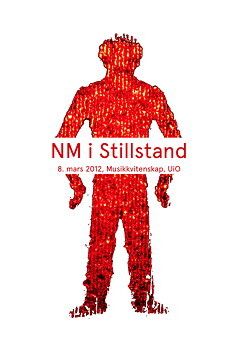

I recently came across a video of the so-called Try not to headbang challenge, where the idea is, well, not to headbang while listening to music. This immediately caught my attention. After all, I have been researching music-related micromotion in recent years and have run the Norwegian Championship of Standstill since 2012. Here is an example of Nath & Johnny trying the challenge:

https://www.youtube.com/watch?v=-I4CBsDT37I

As seen in the video, they are doing OK, although they are far from sitting still....