Releasing the Musical Gestures Toolbox for Python

After several years in the making, we finally “released” the Musical Gestures Toolbox for Python at the NordicSMC Conference this week. The toolbox is a collection of modules targeted at researchers working with video recordings.

Below is a short video in which Bálint Laczkó and I briefly describe the toolbox:

https://youtu.be/tZVX_lDFrwc

About MGT for Python

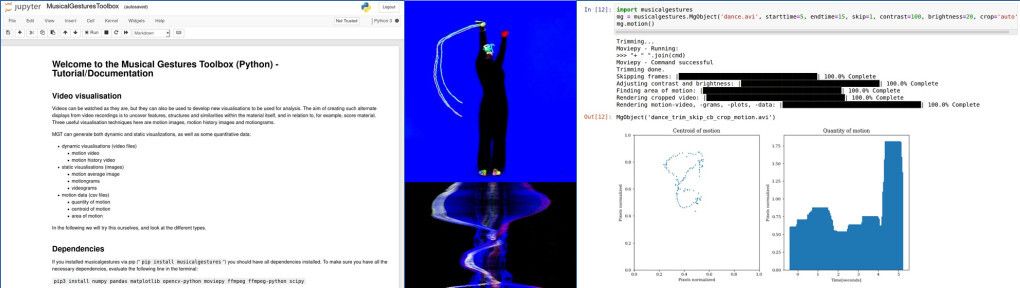

The Musical Gestures Toolbox for Python includes video visualization techniques such as creating motion videos, motion history images, and motiongrams. These visualizations allow for studying video recordings from different temporal and spatial perspectives. The toolbox also includes basic computer vision methods, and it is designed to integrate well with audio analysis toolboxes.
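To make the idea behind motion images and motiongrams concrete, here is a minimal sketch in plain Python. It is not the toolbox's implementation or API: the function names `motion_image` and `motiongrams`, the `threshold` parameter, and the tiny synthetic frames are all assumptions made for illustration. The sketch assumes that a motion image is the absolute difference between two consecutive grayscale frames, and that a motiongram is built by averaging each motion image along one axis and stacking the results over time.

```python
# Illustrative sketch only: hypothetical helper names, not the toolbox's API.
# Frames are grayscale images stored as lists of rows of numbers.

def motion_image(prev_frame, frame, threshold=0):
    """Absolute per-pixel difference; values at or below threshold become 0."""
    rows = []
    for row_prev, row_cur in zip(prev_frame, frame):
        diffs = [abs(cur - prev) for prev, cur in zip(row_prev, row_cur)]
        rows.append([d if d > threshold else 0 for d in diffs])
    return rows


def motiongrams(frames, threshold=0):
    """Collapse each motion image along one axis and stack the results over time.

    Returns (by_row, by_col):
      by_row[t][y] is the mean motion in row y between frames t and t + 1,
      by_col[t][x] is the mean motion in column x between frames t and t + 1.
    """
    by_row, by_col = [], []
    for prev_frame, frame in zip(frames, frames[1:]):
        motion = motion_image(prev_frame, frame, threshold)
        width = len(motion[0])
        height = len(motion)
        by_row.append([sum(row) / width for row in motion])
        by_col.append([sum(col) / height for col in zip(*motion)])
    return by_row, by_col


# A bright pixel moving right, then down, in a 3x3 "video".
frames = [
    [[0, 0, 0], [0, 9, 0], [0, 0, 0]],
    [[0, 0, 0], [0, 0, 9], [0, 0, 0]],
    [[0, 0, 0], [0, 0, 0], [0, 0, 9]],
]
by_row, by_col = motiongrams(frames)
```

Each entry in `by_row` and `by_col` summarizes one pair of frames, so plotting either list as an image gives a compact view of where motion occurred over time. A real recording would call for array-based processing rather than nested lists.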

The toolbox can be run from the terminal, although many people would probably prefer to run it in a Jupyter notebook.

The MGT was initially developed to analyze music-related body motion (of musicians, dancers, and perceivers) but is equally helpful for other disciplines working with video recordings of humans, such as linguistics, pedagogy, psychology, and medicine.

History

This toolbox builds on the Musical Gestures Toolbox for Matlab, which in turn builds on the Musical Gestures Toolbox for Max. The latest version was primarily developed by Bálint Laczkó, Frida Furmyr, and Marcus Widmer.

Read more

To learn more about the Musical Gestures Toolbox for Python, take a look at our paper presented at NordicSMC:

- Laczkó, B., & Jensenius, A. R. (2021). Reflections on the Development of the Musical Gestures Toolbox for Python. Proceedings of the Nordic Sound and Music Computing Conference.