Kickoff seminar

Some pictures from last week's kickoff seminar for the Sensing Music-related Actions project:

Project leader Rolf-Inge Godøy started with a short presentation of the new project.

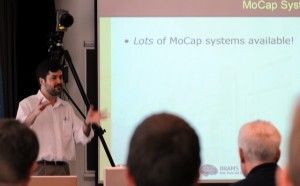

Then Marcelo M. Wanderley (McGill, Montreal) gave an overview of various types of motion capture solutions and the pros and cons of each. He stressed two main challenges he has faced over the years: synchronising various types of mo-cap data with audio, video, music notation, etc., and maintaining backwards compatibility of hardware, software and data.

Ben Knapp (SARC, Queen's University Belfast) followed up with a lecture on various types of biosensing solutions.

Kjell Tore Innervik testing Ben Knapp’s EEG and EOG sensor.

The seminar attracted a very mixed group of researchers, from music, composition and performance to informatics, medicine and psychology.

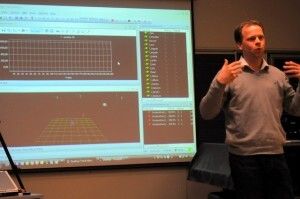

After lunch, Patrik Almström from the Swedish company Qualisys presented their optical motion capture equipment.

A very interesting feature of the Qualisys system is that it can use both active and passive markers. It is also great that the cameras can double as regular high-speed video cameras.

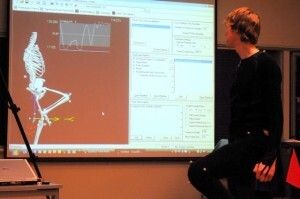

Kristian Nymoen put on the mo-cap suit and demonstrated the real-time possibilities of the system.

Then we walked across campus to the new project lab.

Mats Høvin demonstrated the robot Anna, which we will train as a musical conductor in the autumn.

Mats also showed a mechanical hand with artificial muscles, which we will also test for musical purposes in the project.

Rolf-Inge, Marcelo, myself and Ben “sightseeing” in Oslo after the seminar.